2D-Cylinder (2D Flow Around a Cylinder)

# linux

wget https://paddle-org.bj.bcebos.com/paddlescience/datasets/transformer_physx/cylinder_training.hdf5 -P ./datasets/

wget https://paddle-org.bj.bcebos.com/paddlescience/datasets/transformer_physx/cylinder_valid.hdf5 -P ./datasets/

# windows

# curl https://paddle-org.bj.bcebos.com/paddlescience/datasets/transformer_physx/cylinder_training.hdf5 --output ./datasets/cylinder_training.hdf5

# curl https://paddle-org.bj.bcebos.com/paddlescience/datasets/transformer_physx/cylinder_valid.hdf5 --output ./datasets/cylinder_valid.hdf5

python train_enn.py

python train_transformer.py

python train_enn.py mode=eval EVAL.pretrained_model_path=https://paddle-org.bj.bcebos.com/paddlescience/models/cylinder/cylinder_pretrained.pdparams

python train_transformer.py mode=eval EVAL.pretrained_model_path=https://paddle-org.bj.bcebos.com/paddlescience/models/cylinder/cylinder_transformer_pretrained.pdparams EMBEDDING_MODEL_PATH=https://paddle-org.bj.bcebos.com/paddlescience/models/cylinder/cylinder_pretrained.pdparams

| Model | MSE |
|---|---|
| cylinder_transformer_pretrained.pdparams | 1.093 |

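1. Background

Flow around a cylinder has applications in many fields. In industrial design, for example, it can be used to simulate and optimize fluid flow around equipment, such as the fluid-dynamic performance of wind turbines, cars, and aircraft. It is also applied in environmental protection, for instance in predicting and controlling river floods and in studying the dispersion of pollutants. It is likewise of practical significance in engineering fields such as fluid dynamics, fluid statics, heat exchange, and aerodynamics.

2D Flow Around a Cylinder refers to flow past a two-dimensional circular cylinder. For low-speed steady flow, the flow pattern depends only on the Reynolds number \(Re\). When \(Re \le 1\), inertial forces are secondary to viscous forces, the streamlines upstream and downstream of the cylinder are fore-aft symmetric, and the drag coefficient is approximately inversely proportional to \(Re\) (taking values of roughly 10 to 60). Flow in this range of \(Re\) is called the Stokes regime. As \(Re\) increases, the streamlines upstream and downstream of the cylinder gradually lose their symmetry.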

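2. Problem Definition

The flow is governed by the two-dimensional incompressible Navier-Stokes equations.

Mass conservation:

\[\frac{\partial u}{\partial x} + \frac{\partial v}{\partial y} = 0\]

\(x\)-momentum conservation:

\[\frac{\partial u}{\partial t} + u\frac{\partial u}{\partial x} + v\frac{\partial u}{\partial y} = -\frac{1}{\rho}\frac{\partial p}{\partial x} + \nu\left(\frac{\partial^2 u}{\partial x^2} + \frac{\partial^2 u}{\partial y^2}\right)\]

\(y\)-momentum conservation:

\[\frac{\partial v}{\partial t} + u\frac{\partial v}{\partial x} + v\frac{\partial v}{\partial y} = -\frac{1}{\rho}\frac{\partial p}{\partial y} + \nu\left(\frac{\partial^2 v}{\partial x^2} + \frac{\partial^2 v}{\partial y^2}\right)\]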

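Let the characteristic scales be:

\(t^* = \frac{L}{U_0}\)

\(x^* = y^* = L\)

\(u^* = v^* = U_0\)

\(p^* = \rho {U_0}^2\)

Define:

Dimensionless time: \(\tau = \frac{t}{t^*}\)

Dimensionless \(x\) coordinate: \(X = \frac{x}{x^*}\); dimensionless \(y\) coordinate: \(Y = \frac{y}{y^*}\)

Dimensionless \(x\) velocity: \(U = \frac{u}{u^*}\); dimensionless \(y\) velocity: \(V = \frac{v}{v^*}\)

Dimensionless pressure: \(P = \frac{p}{p^*}\)

Reynolds number: \(Re = \frac{L U_0}{\nu}\)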

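This yields the following dimensionless Navier-Stokes equations, which are imposed on the interior of the fluid domain.

Mass conservation:

\[\frac{\partial U}{\partial X} + \frac{\partial V}{\partial Y} = 0\]

\(x\)-momentum conservation:

\[\frac{\partial U}{\partial \tau} + U\frac{\partial U}{\partial X} + V\frac{\partial U}{\partial Y} = -\frac{\partial P}{\partial X} + \frac{1}{Re}\left(\frac{\partial^2 U}{\partial X^2} + \frac{\partial^2 U}{\partial Y^2}\right)\]

\(y\)-momentum conservation:

\[\frac{\partial V}{\partial \tau} + U\frac{\partial V}{\partial X} + V\frac{\partial V}{\partial Y} = -\frac{\partial P}{\partial Y} + \frac{1}{Re}\left(\frac{\partial^2 V}{\partial X^2} + \frac{\partial^2 V}{\partial Y^2}\right)\]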

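Dirichlet boundary conditions are imposed on the boundaries of the fluid domain and on the cylinder surface inside it.

Inlet boundary of the fluid domain:

\[U = 1, \quad V = 0\]

Cylinder surface:

\[U = 0, \quad V = 0\]

Outlet boundary of the fluid domain:

\[P = 0\]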
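The nondimensionalization defined in this section can be sketched in a few lines of Python. This is only an illustration of the formulas for \(t^*\), \(p^*\), and \(Re\); the class name `Scales`, the function `to_dimensionless`, and all numeric values are assumptions chosen for the example and do not come from the PaddleScience code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scales:
    """Characteristic scales (hypothetical helper, not part of PaddleScience)."""

    length: float     # L
    velocity: float   # U_0
    density: float    # rho
    viscosity: float  # nu, kinematic viscosity

    @property
    def time(self):
        return self.length / self.velocity  # t* = L / U_0

    @property
    def pressure(self):
        return self.density * self.velocity ** 2  # p* = rho * U_0^2

    @property
    def reynolds(self):
        return self.length * self.velocity / self.viscosity  # Re = L U_0 / nu


def to_dimensionless(s, t, x, y, u, v, p):
    """Divide each dimensional quantity by its characteristic scale."""
    return {
        "tau": t / s.time,
        "X": x / s.length,
        "Y": y / s.length,
        "U": u / s.velocity,
        "V": v / s.velocity,
        "P": p / s.pressure,
    }


# Illustrative values only.
scales = Scales(length=2.0, velocity=1.0, density=1.0, viscosity=0.01)
state = to_dimensionless(scales, t=4.0, x=1.0, y=-1.0, u=0.5, v=0.25, p=2.0)
print(scales.reynolds, state)
```

With these scales, only \(Re\) remains as a parameter of the dimensionless equations, which is why the dataset described later is indexed by Reynolds number.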

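3. Solving the Problem

The following explains how to solve this problem with deep learning, based on PaddleScience code. This case follows the method of the paper Transformers for Modeling Physical Systems; for the theory behind the method, refer to the linked documentation or to the original paper. The dataset is introduced first, followed by the construction of the supervised constraint, the model, and the other components for each of the method's two training steps (Embedding model training and Transformer model training). For the remaining details, refer to the API documentation.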

3.1 Dataset

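The dataset is the one provided by Transformer-Physx. Its data were computed with OpenFOAM, with a time step size of 0.5, and \(Re\) sampled at random from the following range:

\[Re \sim U(100, 750)\]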

The dataset is split as follows:

| Dataset | Number of flow simulations | Number of time steps | Download |
|---|---|---|---|
| Training set | 27 | 400 | cylinder_training.hdf5 |
| Validation set | 6 | 400 | cylinder_valid.hdf5 |

Dataset website: https://zenodo.org/record/5148524#.ZDe77-xByrc

3.2 Embedding Model

The parameter variables used by this step are defined in examples/cylinder/2d_unsteady/transformer_physx/train_enn.py. The meaning of each parameter is explained below where it is used.

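3.2.1 Constraint Construction

This case solves the problem with a data-driven method, so the supervised constraint is built with PaddleScience's built-in SupervisedConstraint. Before the constraint is defined, the data-loading parameters it uses must be specified.

The "dataset" field sets the Dataset class to CylinderDataset and gives the values of its initialization parameters:

- file_path: path of the training dataset file, set to the value of the variable train_file_path;
- input_keys: names of the model's input variables, set to the variable input_keys;
- label_keys: names of the ground-truth label variables, set to the variable output_keys;
- block_size: number of time steps in each training sample, set to the value of the variable train_block_size;
- stride: number of time steps between two consecutive training samples, set to 16;
- weight_dict: loss weights between each model output variable and its ground-truth label, built from output_keys and weights.

The "sampler" field sets the Sampler class to BatchSampler and sets its initialization parameters drop_last and shuffle to True.

train_dataloader_cfg also defines the values of batch_size and num_workers.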
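The configuration described above can be pictured as a nested dictionary. The sketch below is an assumption-laden illustration rather than the example's actual code: the field names come from the description, the "name" key is assumed to be how the class is selected, and the key names, weights, train_block_size, batch_size, and num_workers values are placeholders. The helper `count_windows` is hypothetical and shows what block_size and stride imply for the number of training samples, assuming windows start every `stride` steps.

```python
# Placeholder values: only stride=16, the class names, and drop_last/shuffle
# are taken from the text; everything else is assumed for illustration.
input_keys = ("states",)
output_keys = ("pred_states", "recover_states")
weights = (1.0, 1.0)
train_block_size = 4
train_file_path = "./datasets/cylinder_training.hdf5"

train_dataloader_cfg = {
    "dataset": {
        "name": "CylinderDataset",
        "file_path": train_file_path,
        "input_keys": input_keys,
        "label_keys": output_keys,
        "block_size": train_block_size,
        "stride": 16,
        "weight_dict": dict(zip(output_keys, weights)),
    },
    "sampler": {"name": "BatchSampler", "drop_last": True, "shuffle": True},
    "batch_size": 64,
    "num_workers": 4,
}


def count_windows(num_steps, block_size, stride):
    """Number of length-block_size windows whose starts are stride steps apart."""
    if num_steps < block_size:
        return 0
    return (num_steps - block_size) // stride + 1


dataset_cfg = train_dataloader_cfg["dataset"]
# 27 simulations with 400 time steps each, as listed in the dataset table.
windows_per_simulation = count_windows(400, dataset_cfg["block_size"], dataset_cfg["stride"])
total_samples = 27 * windows_per_simulation
# With drop_last=True, an incomplete final batch is discarded.
batches_per_epoch = total_samples // train_dataloader_cfg["batch_size"]
print(windows_per_simulation, total_samples, batches_per_epoch)
```

A larger stride reduces the overlap between neighbouring samples and therefore the number of samples per epoch; a larger block_size gives the model longer sequences per sample.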

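The supervised constraint is defined in examples/cylinder/2d_unsteady/transformer_physx/train_enn.py.

The first argument of SupervisedConstraint is the data-loading configuration; here it is the train_dataloader_cfg defined above.

The second argument is the loss function; here it is an MSELoss with L2Decay, implemented by the class MSELossWithL2Decay, whose regularization_dict sets the names of the regularized variables and their weights.

The third argument specifies how the intermediate variables to be constrained are computed during training; here the constrained variables are simply the network outputs.

The fourth argument is the name of the constraint, which makes it easy to index later. Here it is named "Sup".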
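The idea behind an MSE loss with L2 decay can be sketched as follows. This is a minimal illustration on plain Python lists, assuming the penalty is the weighted sum of squared entries of each variable named in regularization_dict; the library's exact reduction and weighting may differ, and the key names "pred" and "k_matrix" are hypothetical.

```python
def mse(pred, label):
    """Mean squared error between two equally long sequences."""
    return sum((p - q) ** 2 for p, q in zip(pred, label)) / len(pred)


def mse_with_l2_decay(outputs, labels, regularization_dict):
    """MSE over all labelled keys plus a weighted L2 penalty on selected outputs."""
    loss = sum(mse(outputs[key], labels[key]) for key in labels)
    for key, weight in regularization_dict.items():
        # Assumed form of the penalty: weight * sum of squares.
        loss += weight * sum(value ** 2 for value in outputs[key])
    return loss


# Hypothetical outputs: one predicted variable and one regularized variable.
outputs = {"pred": [1.0, 2.0], "k_matrix": [0.5, -0.5]}
labels = {"pred": [1.0, 4.0]}
loss = mse_with_l2_decay(outputs, labels, {"k_matrix": 0.1})
print(loss)
```

Regularized variables do not need a label: they appear only in the penalty term, which keeps their magnitude small.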

3.2.2 模型构建¶

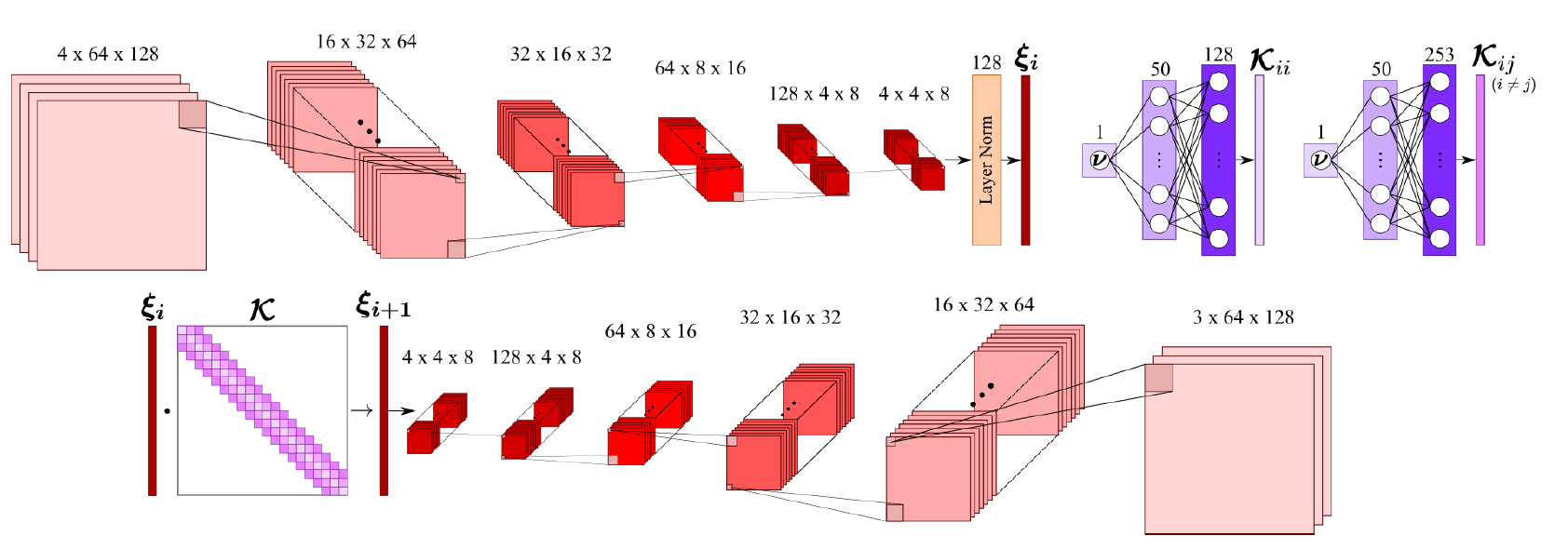

在该案例中,Embedding 模型使用了卷积神经网络实现 Embedding 模型,如下图所示。

用 PaddleScience 代码表示如下:

| examples/cylinder/2d_unsteady/transformer_physx/train_enn.py | |

|---|---|

其中,CylinderEmbedding 的前两个参数在前文中已有描述,这里不再赘述,网络模型的第三、四个参数是训练数据集的均值和方差,用于归一化输入数据。计算均值、方差的的代码表示如下:
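Per-channel normalization statistics of this kind can be computed as in the sketch below. It is not the example's code: the sample layout (one tuple of ux, uy, p per point) and the function names are assumptions, and the sketch divides by the standard deviation, whereas the example itself may store the variance.

```python
import statistics


def channel_stats(samples):
    """Return per-channel mean and population standard deviation."""
    channels = list(zip(*samples))  # regroup values channel by channel
    mean = [statistics.fmean(channel) for channel in channels]
    std = [statistics.pstdev(channel) for channel in channels]
    return mean, std


def normalize(sample, mean, std):
    """Shift and scale one sample so each channel has zero mean, unit spread."""
    return [(x - m) / s for x, m, s in zip(sample, mean, std)]


# Hypothetical data: each tuple holds (ux, uy, p) at one point.
samples = [(1.0, 0.0, 2.0), (3.0, 2.0, 2.5), (5.0, 4.0, 3.0)]
mean, std = channel_stats(samples)
print(mean, std, normalize(samples[1], mean, std))
```

Computing the statistics on the training set only, and reusing them for validation, keeps the two sets on the same scale.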

3.2.3 Learning Rate and Optimizer Construction

This case uses the ExponentialDecay learning-rate schedule with a learning rate of 0.001. The optimizer is Adam, and gradients are clipped with Paddle's built-in ClipGradByGlobalNorm.
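The two mechanisms named above can be sketched without any framework. The functions below are illustrative stand-ins, not the Paddle or PaddleScience implementations: the decay factor gamma, decay_steps, and clip_norm are assumed values, and the schedule is written in the common form lr = base_lr * gamma^(step / decay_steps).

```python
import math


def exponential_decay(base_lr, gamma, decay_steps, step):
    """Learning rate after `step` steps of exponential decay."""
    return base_lr * gamma ** (step / decay_steps)


def clip_by_global_norm(grads, clip_norm):
    """Rescale all gradients together so their joint L2 norm is at most clip_norm."""
    global_norm = math.sqrt(sum(g * g for grad in grads for g in grad))
    scale = clip_norm / max(global_norm, clip_norm)  # 1.0 when already small enough
    return [[g * scale for g in grad] for grad in grads]


# base_lr=0.001 is from the text; gamma and decay_steps are assumptions.
lr_after_one_period = exponential_decay(0.001, gamma=0.995, decay_steps=100, step=100)

# Two hypothetical parameter gradients with global norm 5.0.
clipped = clip_by_global_norm([[3.0], [4.0]], clip_norm=1.0)
print(lr_after_one_period, clipped)
```

Clipping by the global norm preserves the direction of the combined gradient, unlike clipping each tensor separately.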

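3.2.4 Validator Construction

During training, the current model is evaluated on the validation set at a fixed interval of training epochs. The validator for this is built with SupervisedValidator.

SupervisedValidator is similar to SupervisedConstraint. The difference is that a validator requires an evaluation metric, which here is ppsci.metric.MSE.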

3.2.5 Model Training and Evaluation

Once the above is set up, the instantiated objects are passed in order to ppsci.solver.Solver, and training and evaluation are then started (see examples/cylinder/2d_unsteady/transformer_physx/train_enn.py).

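3.3 Transformer Model

The previous section described how to set up training and evaluation of the Embedding model. This section describes how to use the trained Embedding model to train the Transformer model. Because the steps largely mirror those for the Embedding model, parameters that the two have in common are not described again in detail. The parameter variables are defined at the start of the code, and the meaning of each is explained below where it is used.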

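3.3.1 Constraint Construction

The Transformer model is likewise trained with a data-driven method, so the supervised constraint is again built with PaddleScience's built-in SupervisedConstraint. Before the constraint is defined, the data-loading parameters it uses must be specified.

These parameters are largely the same as for the Embedding model and are not repeated here. Note that the input to Transformer training is the output of the Encoder module of the Embedding model. The trained Embedding model is therefore passed to CylinderDataset as a parameter, and at initialization the dataset first maps the training data into the embedding space.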
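The "encode once at initialization" pattern described above can be sketched as follows. `EncodedSeries` and `toy_encoder` are hypothetical names invented for this illustration; the real CylinderDataset and the trained Embedding model's Encoder are not reproduced here, and the windowing by block_size and stride is assumed to work as in the Embedding step.

```python
class EncodedSeries:
    """Time series whose states are mapped to embeddings when it is created."""

    def __init__(self, states, encoder, block_size, stride):
        # Encode every physical state up front, so training only ever
        # reads vectors in the embedding space.
        self.embeddings = [encoder(state) for state in states]
        self.block_size = block_size
        self.starts = range(0, len(self.embeddings) - block_size + 1, stride)

    def __len__(self):
        return len(self.starts)

    def __getitem__(self, index):
        start = self.starts[index]
        return self.embeddings[start:start + self.block_size]


def toy_encoder(state):
    # Stand-in for a trained encoder: two hand-picked features.
    return (sum(state) / len(state), max(state) - min(state))


# Ten hypothetical two-component states.
states = [(float(t), float(t) + 2.0) for t in range(10)]
dataset = EncodedSeries(states, toy_encoder, block_size=4, stride=2)
print(len(dataset), dataset[0])
```

Encoding at construction time trades memory for speed: the encoder runs once per state instead of once per training step.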

The supervised constraint is defined in examples/cylinder/2d_unsteady/transformer_physx/train_transformer.py.

3.3.2 模型构建¶

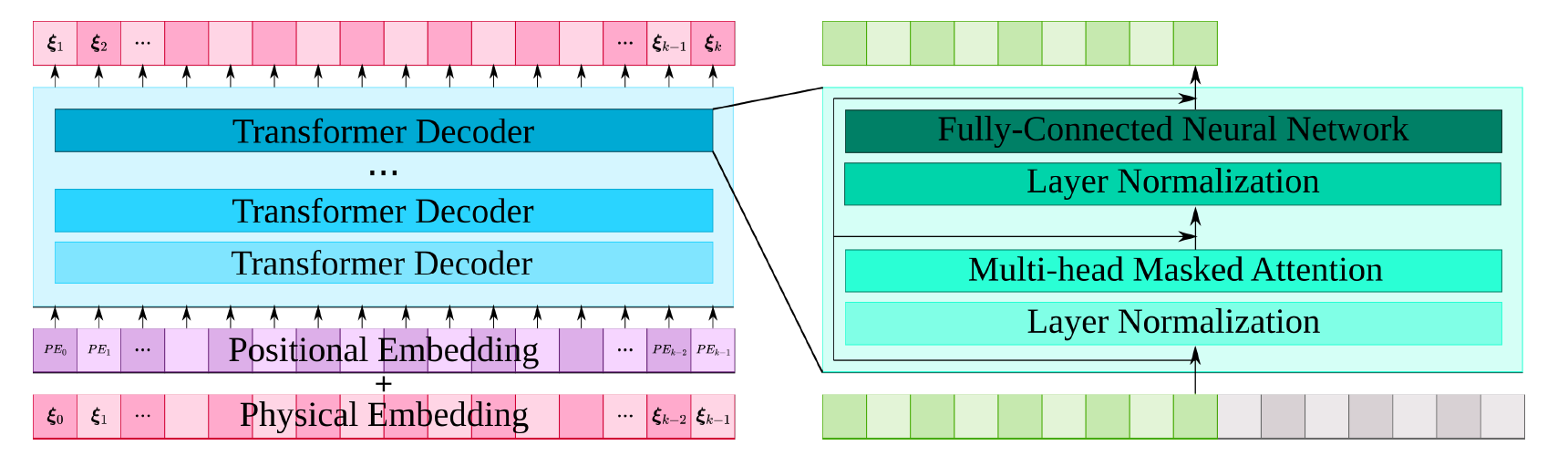

在该案例中,Transformer 模型的输入输出都是编码空间中的向量,使用的 Transformer 结构如下:

用 PaddleScience 代码表示如下:

| examples/cylinder/2d_unsteady/transformer_physx/train_transformer.py | |

|---|---|

类 PhysformerGPT2 除了需要填入 input_keys、output_keys 外,还需要设置 Transformer 模型的层数 num_layers、上下文的大小 num_ctx、输入的 Embedding 向量的长度 embed_size、多头注意力机制的参数 num_heads,在这里填入的数值为6、16、128、4。
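The four hyperparameters can be collected in a small configuration object, as sketched below. `TransformerConfig` is a hypothetical container, not part of PaddleScience. The divisibility check reflects the general convention that multi-head attention splits the embedding evenly across heads; whether PhysformerGPT2 enforces it in this way is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TransformerConfig:
    """Hypothetical holder for the values quoted in the text."""

    num_layers: int = 6    # number of Transformer layers
    num_ctx: int = 16      # context size (sequence length seen at once)
    embed_size: int = 128  # length of each input embedding vector
    num_heads: int = 4     # number of attention heads

    @property
    def head_dim(self):
        """Width of each attention head when the embedding is split evenly."""
        if self.embed_size % self.num_heads != 0:
            raise ValueError("embed_size must be divisible by num_heads")
        return self.embed_size // self.num_heads


cfg = TransformerConfig()
print(cfg, cfg.head_dim)
```

With the values above, each of the 4 heads attends over 32 of the 128 embedding dimensions.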

3.3.3 Learning Rate and Optimizer Construction

This case uses the CosineWarmRestarts learning-rate schedule with a learning rate of 0.001. The optimizer is Adam, and gradients are clipped with Paddle's built-in ClipGradByGlobalNorm.
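Cosine annealing with warm restarts can be sketched in a few lines. The function below follows the standard formulation (cosine decay within a cycle, a jump back to the base rate at each restart, cycle length multiplied by t_mult). It is an illustration, not PaddleScience's CosineWarmRestarts class, and the values of t_0, t_mult, and eta_min are assumptions.

```python
import math


def cosine_warm_restarts(base_lr, eta_min, t_0, t_mult, epoch):
    """Learning rate at `epoch` for cosine annealing with warm restarts."""
    t_i, t_cur = t_0, epoch
    # Walk through completed cycles; each cycle is t_mult times longer.
    while t_cur >= t_i:
        t_cur -= t_i
        t_i *= t_mult
    cosine = math.cos(math.pi * t_cur / t_i)
    return eta_min + 0.5 * (base_lr - eta_min) * (1 + cosine)


# base_lr=0.001 is from the text; the cycle settings are placeholders.
schedule = [cosine_warm_restarts(0.001, 0.0, t_0=14, t_mult=2, epoch=e) for e in range(42)]
print(schedule[0], schedule[7], schedule[13], schedule[14])
```

Here the rate decays over epochs 0 to 13, restarts at epoch 14, and then decays over a cycle twice as long.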

3.3.4 Validator Construction

During training, the current model is evaluated on the validation set at a fixed interval of training epochs. The validator for this is built with SupervisedValidator.

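3.3.5 Visualizer Construction

In this case, a visualizer can be built to visualize the evaluation results during model evaluation. Because the Transformer model outputs predictions in the embedding space, which cannot be visualized directly, the outputs must additionally be transformed to the physical state space with the Decoder module of the Embedding network.

The code that transforms the Transformer model's outputs to the physical state space is defined first, in examples/cylinder/2d_unsteady/transformer_physx/train_transformer.py.

The program first loads the trained Embedding model and then implements the transformation from embedding vectors to the physical state space in the __call__ method of OutputTransform.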
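The shape of such a callable transform can be sketched as below. Only the class name OutputTransform and the use of __call__ come from the text; the constructor argument, the dictionary keys "pred_embeds" and "pred_states", and `toy_decoder` are hypothetical stand-ins for the trained Embedding model's Decoder and the example's real variable names.

```python
class OutputTransform:
    """Callable that maps predicted embeddings back to physical states."""

    def __init__(self, decoder):
        # In the example, the decoder belongs to the trained Embedding model.
        self.decoder = decoder

    def __call__(self, outputs):
        # Decode every predicted embedding vector in the sequence.
        return {
            "pred_states": [self.decoder(vec) for vec in outputs["pred_embeds"]]
        }


def toy_decoder(embedding):
    # Stand-in decoder: rebuilds two values from their mean and spread.
    mean, spread = embedding
    return (mean - spread / 2, mean + spread / 2)


transform = OutputTransform(toy_decoder)
result = transform({"pred_embeds": [(1.0, 2.0), (2.0, 2.0)]})
print(result)
```

Wrapping the decoder in a callable object lets the visualizer apply it to model outputs without knowing anything about the Embedding model itself.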

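With the transform in place, the visualizer can be built.

The dataset of the mse_validator defined above is used for visualization, and the variable vis_data_nums controls how many samples are visualized. The visualizer itself is built with VisualizerScatter3D.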

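3.3.6 Model Training, Evaluation, and Visualization

Once the above is set up, the instantiated objects are passed in order to ppsci.solver.Solver, and training and evaluation are then started.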

4. Complete Code

Source: examples/cylinder/2d_unsteady/transformer_physx/train_transformer.py

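5. Results

This section compares the model's predictions with the results of the traditional numerical method for the problem in this case. ux and uy denote the velocities in the x and y directions, and p denotes the pressure.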

Created: November 6, 2023